Because a Twisted reactor cannot be restarted, running Scrapy again inside a reused Lambda container fails. To solve this issue, I simply ran each new scraper in a new process (using `multiprocessing`), which makes each execution run separately from past executions. This may not be ideal, but it is the easiest solution, and in the worst case we just waste a little bit of memory.

# AWS Lambda web scraper code

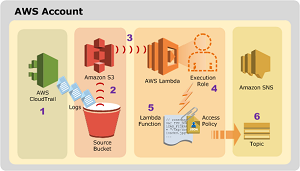

A web scraper running on AWS Lambda: this is an example of a web scraper running on AWS Lambda and Lambda Layers. It assumes that you have AWS CDK and Docker installed, and its Docker image relies on serverless-chrome.

For the Go example (`go-pdf-lambda`), the setup on AWS is as follows. The Lambda function name doesn't matter, but the function needs to use the Go 1.x runtime. Set the Lambda handler to the Go binary filename (`go-pdf-lambda` in that example), give the Lambda function's role read and write permissions to S3 (through IAM), and add an S3 trigger on all create events.

# How the example code works

Scrapy is a great web scraping framework that allows parallel requests; to achieve this, it is built on top of Twisted and runs inside a Twisted reactor. An important fact about Twisted reactors is that they cannot be restarted. This is not a problem when running on a server or a local machine, but it can be an issue when running code “serverlessly.” For example, if we run our Lambda function twice with little time in between, AWS may reuse the container created for the first execution, and the second run will fail with an error because it attempts to restart the Twisted reactor.

To use the example, create an S3 bucket that has public read access; this is where the podcast app will obtain the RSS feed. Set `OUTPUT_BUCKET` in `podcast_scraper/settings.py` to the bucket you just created. Then create a spider in `podcast_scraper/spiders` (or just try the minimal example, which is already in that folder). Remember to include the `scrapy_podcast_` pipeline, and to yield one `PodcastDataItem` plus one `PodcastEpisodeItem` for each episode (this is also shown in the minimal example). To build and deploy our code, we just need to run `sam build --use-container` followed by `sam deploy`.
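The item contract mentioned above, one `PodcastDataItem` for the show plus one `PodcastEpisodeItem` per episode, can be illustrated with a small plain-Python sketch. This is an assumption-laden illustration, not the real item definitions: both classes are hypothetical dataclass stand-ins with invented fields, and `parse_podcast` is a hypothetical helper that takes a pre-parsed dict instead of a Scrapy response.

```python
from dataclasses import dataclass
from typing import Iterator, Union


@dataclass
class PodcastDataItem:
    """Hypothetical stand-in for the show-level item (fields invented)."""
    title: str
    description: str


@dataclass
class PodcastEpisodeItem:
    """Hypothetical stand-in for the per-episode item (fields invented)."""
    title: str
    audio_url: str


def parse_podcast(page: dict) -> Iterator[Union[PodcastDataItem, PodcastEpisodeItem]]:
    """Yield one show item, then one episode item for each episode in `page`.

    `page` is a hypothetical pre-parsed dict standing in for a Scrapy response.
    """
    yield PodcastDataItem(title=page["title"], description=page["description"])
    for episode in page["episodes"]:
        yield PodcastEpisodeItem(title=episode["title"], audio_url=episode["audio_url"])
```

In a real spider, the same two kinds of `yield` would sit inside the spider's `parse(self, response)` callback, with the values extracted from `response` rather than read from a dict.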

Fifteen minutes (900 seconds) is the maximum timeout for an AWS Lambda function.

# AWS Lambda web scraper install

A couple of weeks ago I wrote a sort of “podcast maker” web scraper (you can read about it here), and now I wanted to run it regularly, for free, and without too many problems. So I decided that AWS Lambda was the best alternative. To make it available to others, I wrote a minimal example that you can clone here. To configure it and make it work, you will need to install the AWS SAM CLI.